Building an Autonomous SOC with Agentic AI and GitHub Copilot SDK

The dream of a fully autonomous Security Operations Center (SOC) is moving closer to reality. In this post, we will explore the architecture of our Agentic Cybersecurity System, a Python-based platform where specialized AI agents collaborate to detect threats, respond to incidents, and manage vulnerabilities without human intervention.

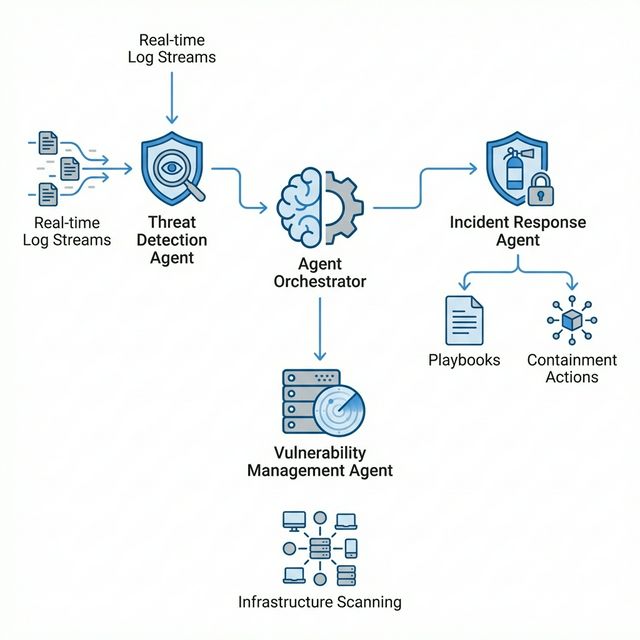

The Architecture: A Multi-Agent System

Traditional automation scripts are linear and brittle. Our system uses a Multi-Agent Architecture where independent agents possess distinct personalities, tools, and responsibilities. They are bound together by a central Orchestrator.

The Orchestrator: The Central Nervous System

At the heart of the system lies the AgentOrchestrator. It does not just run tasks; it manages the lifecycle of agents, facilitates inter-agent communication, and maintains a shared state.

Key responsibilities include:

- Agent Registry: Dynamic registration of capabilities.

- Message Passing: Asynchronous communication between agents (e.g., Threat Agent notifying Incident Response Agent).

- Shared Knowledge Base: A thread-safe store for findings, IOCs, and active incidents.

Here is a glimpse of how the orchestrator handles agent handoffs, allowing complex workflows to span multiple domains:

# src/agents/orchestrator.py

async def handoff(self, from_agent: str, to_agent: str, context: dict[str, Any]) -> Any:

"""Hand off work from one agent to another."""

target = self.get_agent(to_agent)

# Store handoff in knowledge base for audit trails

await self.knowledge_base.store(

"handoffs",

str(uuid4()),

{

"from": from_agent,

"to": to_agent,

"context": context

}

)

# Create context-aware prompt for the target agent

handoff_prompt = (

f"[HANDOFF from {from_agent}]\n"

f"Context: {context.get('summary')}\n"

f"Please continue the analysis and take appropriate action."

)

return await target.process(handoff_prompt)

Powering the Agents: GitHub Copilot SDK

While the architecture defines what the agents do, the GitHub Copilot SDK defines how they think and interact. We chose this SDK because it provides a robust abstraction layer over raw LLM APIs, enabling three critical capabilities.

1. Model Agnosticism (BYOK)

The SDK supports a “Bring Your Own Key” (BYOK) model. This allowed us to build an agnostic system that allows seamless switching between backend providers. In our config.yaml, we can toggle between Groq (for speed), OpenAI (for reasoning depth), or Anthropic without changing a single line of agent code.

Here is how we configure the provider dynamically in src/agents/base.py:

# src/agents/base.py

def _get_provider_config(self) -> ProviderConfig:

"""Create provider configuration for the Copilot SDK."""

provider = self.config.copilot.byok_provider.lower()

api_key = get_api_key(self.config, self._env_settings)

# ... (url resolution logic) ...

return ProviderConfig(

type="openai", # Normalized type for SDK

base_url=base_url,

api_key=api_key,

)

2. Native Function Calling

Bridging the gap between natural language and Python functions is often the hardest part of building agents. The Copilot SDK handles this translation automatically.

In our BaseAgent, we simply register a Python function, and the SDK converts it into a schema the LLM understands as a Tool:

# src/agents/base.py

def to_copilot_tool(self) -> Tool:

"""Convert local tool definition to Copilot SDK format."""

return Tool(

name=self.name,

description=self.description,

handler=self.handler, # The SDK manages the execution loop!

parameters=self.parameters,

)

3. Session State & Interaction

The CopilotSession object automatically manages the conversation history. We don’t need to manually append messages to a list; we just call send_and_wait.

# src/agents/base.py

async def _call_llm(self, messages: list[dict], tools: list[dict] = None) -> dict:

session = await self._ensure_session()

# Send message and wait for response (blocks until LLM replies)

event = await session.send_and_wait(

{"prompt": prompt},

timeout=60.0,

)

# The SDK returns a structured event with content and tool requests

if hasattr(event, 'data'):

content = getattr(event.data, 'content', "")

tool_requests = getattr(event.data, 'tool_requests', None)

# ... process response ...

Meet the Agents

The system is composed of three specialized agents, each designed to mimic a specific role in a human security team.

1. Threat Detection Agent: The Watchdog

This agent monitors data streams in real-time. It does not just look for static signatures; it uses an LLM to analyze context.

Killer Feature: Real-time Log Stream Monitoring

The agent can attach to a live log file (like /var/log/auth.log) and analyze batches of logs as they arrive, identifying subtle anomalies that simpler rules might miss.

# src/agents/threat_detection/agent.py

async def monitor_log_stream(self, source: str):

# ... setup file watching ...

prompt = f"""

Analyze the following Real-Time Log Stream batch for immediate threats:

{logs_to_analyze}

Identify any suspicious activity, anomalies, or IOCs.

"""

yield await self.process(prompt)

2. Incident Response Agent: The First Responder

Once a threat is confirmed, the Incident Response (IR) agent takes over. It is equipped with Playbooks, a set of predefined workflows for common scenarios like Ransomware or Phishing.

It can actively contain threats by isolating hosts or disabling accounts, but safer production deployments can enforce extensive logging instead of auto-execution.

3. Vulnerability Management Agent: The Preventer

While the other agents react to attacks, this agent works to prevent them. It scans infrastructure and, crucially, verifies remediation.

Code snippet from the verify_patch tool showing the verification logic:

# src/agents/vulnerability_management/tools/verify_patch.py

# Determine overall status

failed_checks = [c for c in checks_performed if c["result"] == "fail"]

overall_status = "verified" if not failed_checks else "failed"

if overall_status == "verified":

recommendations.append("Update vulnerability tracking system to mark as remediated")

else:

recommendations.append("Escalate remediation failure")

The Toolbelt: A Modular Arsenal

Each agent is equipped with a specific set of tools (Python functions) that they can call upon. These tools are the “hands” of the system, allowing the “brain” (the LLM) to interact with the world.

1. Threat Detection Tools

analyze_network_traffic: Detects anomalies, command-and-control (C2) communication, and port scans in traffic data.analyze_logs: Parses authentication, system, and web server logs to identify suspicious patterns like brute force attempts.detect_ioc: Scans data against threat intelligence feeds for known malicious IPs, domains, and file hashes.correlate_events: Links seemingly unrelated events across different data sources to identify complex attack campaigns.

2. Incident Response Tools

triage_incident: Analyzes incident data to classify severity, type, and priority based on established criteria.execute_playbook: Runs predefined, step-by-step response procedures for specific scenarios (e.g., Ransomware, Phishing).contain_threat: Takes active measures to stop an attack, such as isolating a host from the network or disabling a compromised user account.collect_evidence: Preserves forensic data (memory dumps, logs, disk images) to maintain the chain of custody.generate_report: Compiles findings and actions into comprehensive reports for different audiences (executive, technical).

3. Vulnerability Management Tools

scan_vulnerabilities: Executes scans against targets to identify CVEs and security flaws.prioritize_risk: Calculates a contextual risk score by combining CVSS data with asset criticality and active threat intelligence.recommend_remediation: Provides specific, actionable guidance on how to fix identified vulnerabilities.verify_patch: The closed-loop validation tool we discussed earlier, ensuring fixes are effective.generate_vuln_report: Creates detailed status reports on the organization’s security posture.

Conclusion

By decoupling these capabilities into distinct agents powered by the GitHub Copilot SDK, we create a system that is modular, scalable, and resilient. The SDK’s robust state management and tool abstraction allow our Threat Agent to detect an attack and seamlessly “hand off” context to the IR Agent, all while the Vulnerability Agent schedules a patch for the root cause. This architecture demonstrates that with the Copilot SDK, building a truly collaborative AI defense system is not just possible, but efficient.

Check out the code on GitHub to see how you can build your own agents.