Persistent Memory for Agentic Systems with Hindsight

One of the most persistent challenges when designing production-grade agentic AI systems is amnesia. AI agents naturally forget everything between sessions. Every conversation or execution starts entirely from zero context about previous incidents, learned patterns, or institutional knowledge. This fundamentally limits the real-world value agents can deliver in complex environments like cybersecurity, customer support, or DevOps.

In this post, let me explain the importance of memory management in agentic systems, distinguish between short-term and long-term memory, and introduce Hindsight, a purpose-built memory system that gives AI agents durable, persistent intelligence. We will also walk through a hands-on project demonstrating Hindsight integrated with LangChain/LangGraph.

Why Memory Management Matters in Agentic AI

Agentic AI systems are designed to operate autonomously, making decisions, executing tools, and interacting with the environment over time. Without effective memory management, agents are restricted to reacting to immediate stimuli.

When agents possess memory, they transform from stateless reactive scripts into intelligent systems capable of continuous learning. They can recognize recurring problems, adapt to user preferences, avoid repeating past mistakes, and construct a long-term understanding of their domain. Memory is the bridge between a simple automation script and true autonomous intelligence.

Short-Term Memory vs. Long-Term Memory

To build effective AI agents, we must architect both short-term and long-term memory.

Short-Term Memory

Short-term memory acts as the agent’s “working context” or scratchpad. It retains the immediate conversational history, interim thought processes, and transient tool outputs within a single execution thread or session.

- Mechanism: Typically implemented by appending messages to the LLM’s context window (e.g., LangChain’s

ConversationBufferMemoryor thread-level check-pointing). - Limitation: It is ephemeral. Once the session ends or the context window limit is reached, the knowledge is lost. It cannot be used to learn across multiple distinct tasks over weeks or months.

Long-Term Memory

Long-term memory is the persistent, durable knowledge base of the agent. It stores facts, historical observations, past successful remediations, and user behaviors across entirely separate sessions and workflows.

- Mechanism: Implemented using external vector databases, knowledge graphs, or specialized memory stores where information is consolidated, indexed, and retrieved on demand.

- Advantage: It allows agents to accumulate wisdom over time, share knowledge across different agents, and handle tasks requiring historical context.

Introducing Hindsight

Hindsight provides a purpose-built, long-term memory system specifically designed for AI agents. Rather than just dumping embeddings into a vector store and hoping for the best, Hindsight structures memory accumulation through three core capabilities:

retain(): Allows agents to explicitly store facts, experiences, and observations into their memory bank.recall(): Enables multi-strategy retrieval (TEMPR) so agents can actively search their memory for relevant historical facts.reflect(): Synthesizes contextual, disposition-aware responses directly from accumulated memory.

The Benefits of Hindsight

What makes Hindsight powerful is Observation Consolidation. When agents record overlapping or related facts, Hindsight automatically merges these raw facts into durable, evidence-grounded beliefs. It builds an Entity Graph under the hood, mapping relationships between concepts (like a specific service, a recurring error, and the proven fix).

Additionally, Hindsight supports Per-Agent Banks. You can configure isolated memory banks tailored to specific agent personas. An incident triage agent builds different expertise compared to a remediation agent, and Hindsight keeps their knowledge structured according to their unique “Bank Missions”.

Walkthrough: DevOps Incident Response Demo

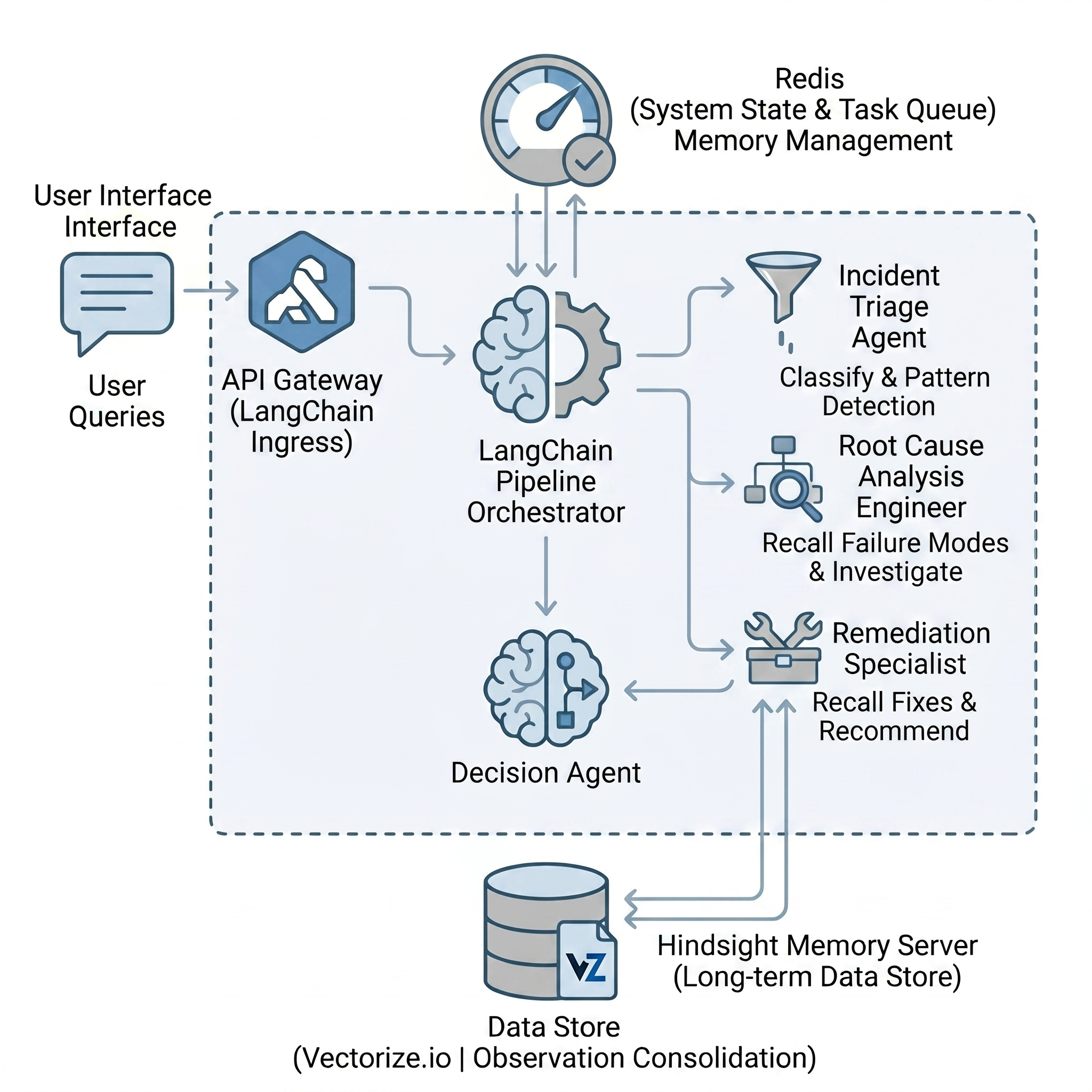

To demonstrate this, I have built a demo project integrating LangChain/LangGraph with Hindsight to simulate a DevOps Incident Response pipeline.

You can find the complete source code on GitHub.

The Scenario

The pipeline consists of three specialized LangGraph ReAct agents, each equipped with its own Hindsight memory bank:

- Incident Triage Analyst: Classifies the severity of incoming alerts and detects recurring patterns (

devops-incident-agent-triage). - Root Cause Analysis Engineer: Investigates the root causes by recalling known failure modes (

devops-incident-agent-rca). - Remediation Specialist: Proposes fixes, remembering exactly what solutions worked for similar issues in the past (

devops-incident-agent-remediation).

Memory Accumulation in Action

When we run the pipeline across multiple simulated incidents, the power of Hindsight becomes obvious:

- Run 1 (API Latency Spike): The agents start with empty memory banks. They investigate from scratch, eventually diagnosing a database connection pool exhaustion. Crucially, they use the

retain()tool to record these findings into Hindsight. - Run 2 (DB Connection Pool Exhausted): A similar alert arrives. The agents use the

recall()tool, surfacing the findings from Run 1. This drastically accelerates the diagnosis, skipping redundant investigations. - Run 3 (Recurring Latency Spike): The agents immediately recognize the recurring pattern. They recommend proven fixes (like tuning PgBouncer) based on accumulated history.

The Code: Implementing Hindsight Tools

The project uses an abstract LLM factory (llm_provider.py), allowing you to easily switch between OpenAI, Groq, or local Ollama models. To give our LangGraph agents memory, we wrap the hindsight_client Python SDK into LangChain @tool decorators.

Here is how we implement the three core memory functions as factory functions that bind to a specific agent’s bank_id:

1. Retaining Knowledge (retain)

When an agent discovers a root cause or a successful fix, it calls this tool to write the findings into its specific memory bank.

def make_retain_tool(bank_id: str):

@tool(f"hindsight_retain_{bank_id}")

def hindsight_retain(content: str) -> str:

"""Store important findings, conclusions, or lessons learned into

long-term memory so they can be recalled in future incidents."""

try:

with _get_client() as client:

client.retain(

bank_id=bank_id,

content=content,

context="devops incident response",

)

return f"Successfully stored to memory bank '{bank_id}'."

except Exception as e:

return f"Failed to store memory ({e})."

return hindsight_retain

2. Recalling Raw Facts (recall)

When an agent starts investigating an incident, it can search its memory bank for relevant historical facts.

def make_recall_tool(bank_id: str):

@tool(f"hindsight_recall_{bank_id}")

def hindsight_recall(query: str) -> str:

"""Search long-term memory for raw facts, observations, and past knowledge

related to the query. Returns individual memory entries ranked by relevance."""

try:

with _get_client() as client:

response = client.recall(

bank_id=bank_id,

query=query,

budget="mid",

max_tokens=4096,

)

if not response.results:

return "No memories found for this query."

lines = []

for r in response.results:

mem_type = getattr(r, "type", "unknown")

lines.append(f"- [{mem_type}] {r.text}")

return "\n".join(lines)

except Exception as e:

return f"Memory search unavailable ({e})."

return hindsight_recall

3. Synthesizing Context (reflect)

Instead of just returning raw search results, reflect asks Hindsight to synthesize a contextual, disposition-aware answer drawing on all relevant memories.

def make_reflect_tool(bank_id: str, context: str | None = None):

@tool(f"hindsight_reflect_{bank_id}")

def hindsight_reflect(query: str) -> str:

"""Query long-term memory for synthesized knowledge about past incidents,

root causes, or remediation outcomes. Use this BEFORE investigating to

check if similar issues have been seen before."""

try:

with _get_client() as client:

response = client.reflect(

bank_id=bank_id,

query=query,

budget="mid",

context=context,

)

result = response.text if hasattr(response, "text") else str(response)

if not result or result.strip() == "":

return (

"No relevant memories found. This appears to be a new type "

"of incident. Proceed with a fresh investigation."

)

return result

except Exception as e:

return (

f"Memory recall unavailable ({e}). "

"Proceed with a fresh investigation based on the incident details."

)

return hindsight_reflect

By wrapping these methods as LangChain tools, the ReAct agents can autonomously decide when to search their memory for context and when to save new findings for the future.

By spinning up the Hindsight server locally using Docker Compose, we can dive into the Control Plane Web UI to visually explore the Memory Banks, track how individual observations are consolidated, and inspect the underlying Entity Graph.

Conclusion

Agentic systems cannot reach their full potential without persistent memory. By moving beyond simple short-term context windows and integrating robust long-term memory solutions like Hindsight, we can build agents that truly learn, adapt, and compound their value over time.

Check out the complete code on GitHub to see how you can equip your own agents with durable memory.

I highly encourage you to explore the concept, run the multi-agent pipeline, and watch the agents build their expertise from one incident to the next! Let me know in the comments if you have any thoughts on managing memory in agentic AI.